Problem

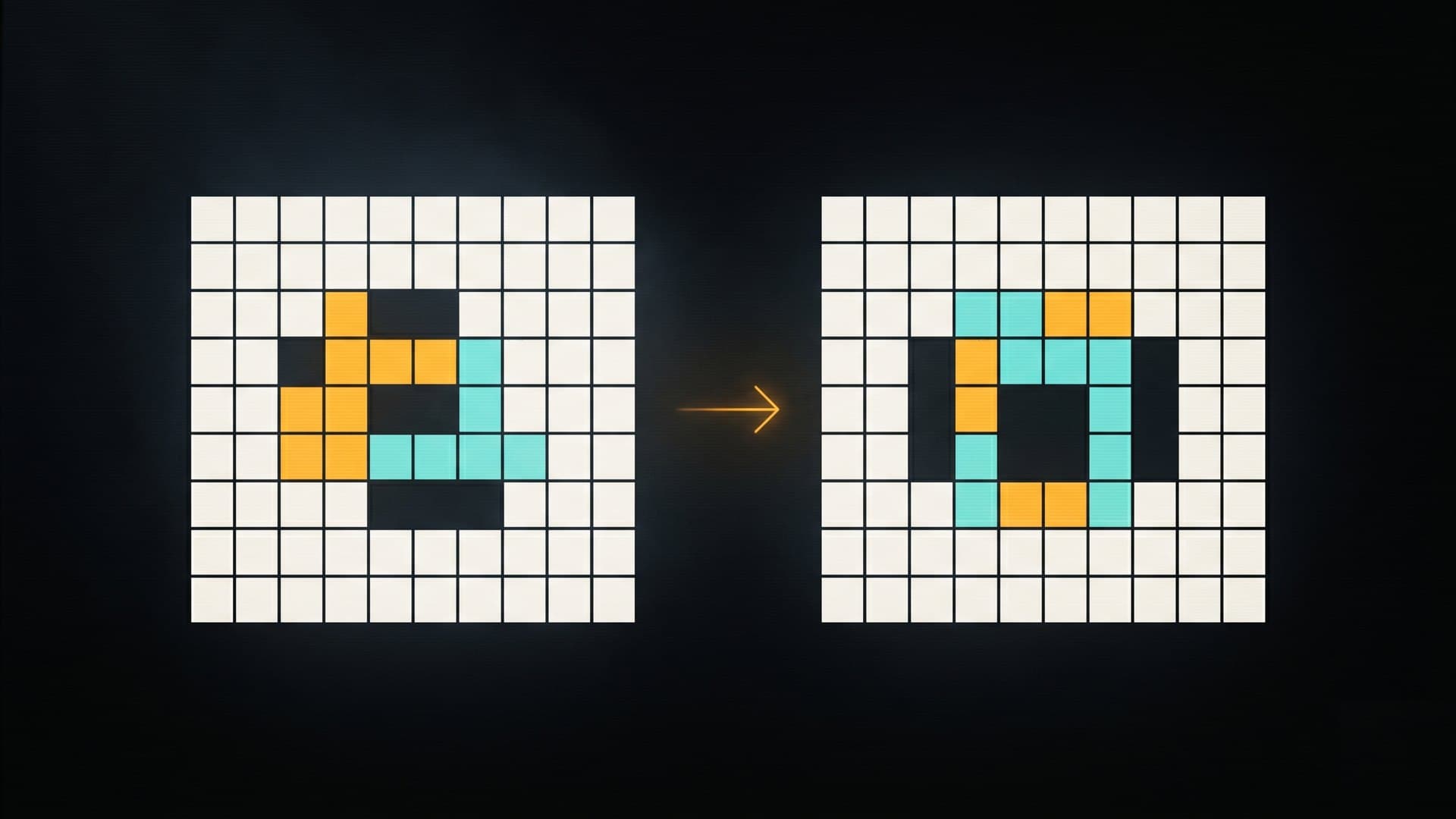

ARC tasks are exact-grid puzzles where 95% pixel accuracy is 0% on ARC. Train a small base model to actually solve them, not approximate them.

Fine-tuning Qwen2.5-1.5B on the abstract reasoning corpus.

ARC tasks are exact-grid puzzles where 95% pixel accuracy is 0% on ARC. Train a small base model to actually solve them, not approximate them.

End-to-end QLoRA pipeline on Qwen2.5-1.5B-Instruct: 4-bit nf4 with double-quant, LoRA r=16 alpha=32 across full attention + MLP. Built the dataset from scratch: 400 training tasks expanded to 50 augmented variants each, prompt/completion JSONL with token-budget filtering. Wrote a strict ARC evaluator that reports exact_match, parse_failures, and shape_mismatches separately.

Production run on A100 40GB. Hardware-aware configs documented for T4 (16GB) through H100 / G4 Blackwell. Cosine LR schedule, warmup 0.03, checkpointing every 100 steps to survive Colab timeouts.

1 epoch in ~28 minutes on A100. Train loss 0.4801 to 0.07586. Strict-grader taxonomy let me diagnose failure modes (shape vs parse vs content) instead of guessing.